The DCU IP prefetcher attempts to load the next instruction before the core actually requests it. When the L2 cache has too many outstanding requests, the L2 prefetchers store the data in the LLC.

In storage, the unit of transportation is a block; in memory it is called a line. The Intel Xeon microarchitecture uses a cache line size of 64 bytes.

Two L2 prefetchers exist: the Spatial Prefetcher and the Streamer. The spatial prefetcher attempts to complete every cache line fetched to the L2 cache with another cache line in order to fill a 128-byte aligned chunk. The streamer monitors read requests from the L1D cache and fetches the appropriate data and instructions. Vendors might use their own designation for L1 and L2 prefetchers.

All four prefetchers are extremely important for performance. There are some known use cases where prefetchers consume more bandwidth and CPU cycles than they actually benefit performance, but these cases are extremely rare. Testing prefetcher effectiveness is extremely difficult, as synthetic tests usually focus on measuring best-case bandwidth and latency using sequential access patterns. And you guessed it: that is the workload pattern where prefetchers shine.
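The 128-byte pairing performed by the spatial prefetcher comes down to simple address arithmetic. The following is a minimal sketch, assuming 64-byte cache lines; the function names are hypothetical and chosen only for illustration, and the code models the address math, not the prefetcher hardware itself.

```python
CACHE_LINE = 64    # bytes per cache line (assumed, as on Intel Xeon)
PAIR_CHUNK = 128   # the spatial prefetcher fills 128-byte aligned chunks


def line_base(addr: int) -> int:
    """Start address of the cache line that contains addr."""
    return addr & ~(CACHE_LINE - 1)


def chunk_base(addr: int) -> int:
    """Start address of the 128-byte aligned chunk that contains addr."""
    return addr & ~(PAIR_CHUNK - 1)


def buddy_line(addr: int) -> int:
    """Start address of the other cache line in the same 128-byte chunk."""
    # Flipping bit 6 switches between the lower and upper 64-byte half.
    return line_base(addr) ^ CACHE_LINE


if __name__ == "__main__":
    for addr in (0x1000, 0x1040, 0x10A7):
        print(hex(addr), "line", hex(line_base(addr)),
              "buddy", hex(buddy_line(addr)),
              "chunk", hex(chunk_base(addr)))
```

A demand fetch of the line holding `addr` would, in this model, be completed with the line returned by `buddy_line(addr)`, so that the whole chunk starting at `chunk_base(addr)` ends up in the L2 cache.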

In comparison with the on-die caches, it takes roughly 190 cycles to get the data from local memory, while it could take the CPU a whopping 310 cycles to load the data from remote memory.

Each core has a dedicated L1 and L2 cache. This is referred to as private cache, as no other core can overwrite the cache lines; the LLC is shared between cores. The L1 cache is split into two separate elements, the instruction cache (32KB) and the data cache (L1D, 32KB). The L2 cache (256KB) is shared by instructions and data (unified) and is considered to be a non-inclusive cache: it does not have to contain all the bits that are present in the L1 cache (instructions and data). However, it is likely to hold the same data and instructions, as it is bigger (fewer evictions). When data is fetched from memory, it fills all cache levels on the way to the core. It is also placed in the LLC because the LLC is designed as an inclusive cache: it must have all the data contained in the L2 or L1 cache.

In order to improve performance, data can be speculatively loaded into the L1 and L2 cache; this is called prefetching. It’s the job of the prefetcher to load data into the cache before the core needs it. Performance improvements of up to 30% have been reported. The Xeon microarchitecture can make use of both hardware and software prefetching. A well-known software prefetching technology is SSE (Streaming SIMD Extensions; SIMD: Single Instruction, Multiple Data). SSE provides hints to the CPU about which data to prefetch for an instruction. The hardware prefetchers are split between the L1 and L2 caches.

The component that actually stores the data in the L1D is called the data cache unit (DCU) and is 32KB in size. The L1D manages all the loads and stores of the data. The DCU prefetcher fetches the next cache lines from the memory hierarchy when a particular access pattern is detected.
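To make the idea of pattern-triggered prefetching concrete, here is a toy model of a next-line prefetcher. It is a sketch under loud assumptions: the trigger rule (an access to the line directly after the previously accessed line), the unbounded cache, and the name `count_misses` are all invented for illustration and do not describe Intel's actual DCU prefetcher logic.

```python
CACHE_LINE = 64  # bytes per cache line (assumed)


def count_misses(addresses, prefetch=True):
    """Count demand misses for a sequence of byte addresses.

    Toy model: the cache never evicts, and the hypothetical prefetcher
    pulls in line N+1 whenever the access stream steps from line N-1 to N.
    """
    cache = set()        # line numbers currently cached
    last_line = None     # line touched by the previous access
    misses = 0
    for addr in addresses:
        line = addr // CACHE_LINE
        if line not in cache:
            misses += 1
            cache.add(line)
        # Ascending pattern detected: speculatively fetch the next line.
        if prefetch and last_line is not None and line == last_line + 1:
            cache.add(line + 1)
        last_line = line
    return misses


if __name__ == "__main__":
    sequential = list(range(0, 16 * CACHE_LINE, 8))       # walk 16 lines
    scattered = [n * CACHE_LINE for n in (0, 5, 2, 9, 4)]  # no pattern
    print("sequential:", count_misses(sequential, prefetch=False),
          "->", count_misses(sequential, prefetch=True))
    print("scattered: ", count_misses(scattered, prefetch=False),
          "->", count_misses(scattered, prefetch=True))
```

In this model the sequential walk only misses on the first two lines once prefetching is enabled, while the scattered accesses see no benefit, since the pattern never triggers the prefetcher.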

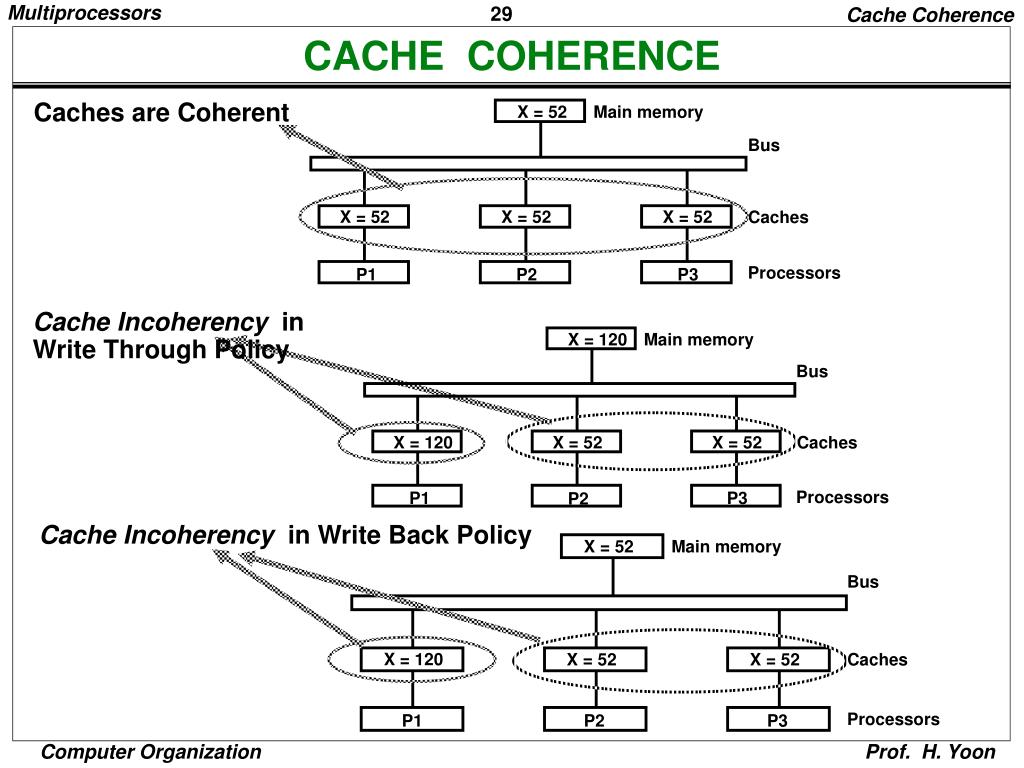

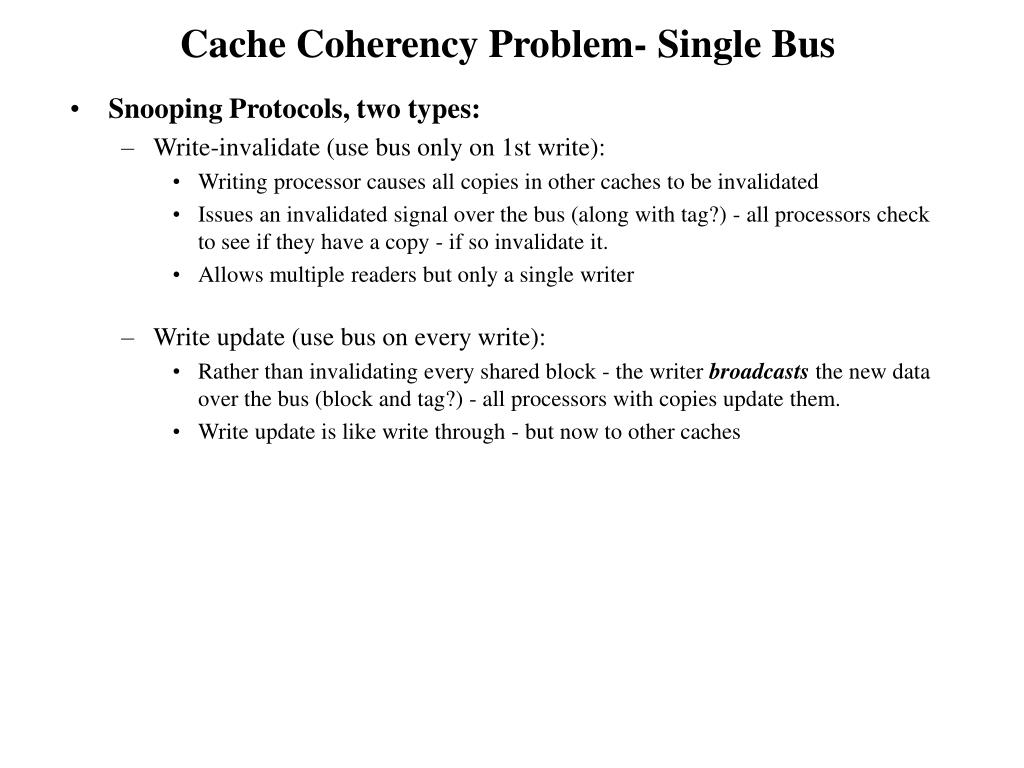

Frank Denneman’s books include “vSphere 6.5 Host Technical Deep Dive” and the “vSphere Clustering Technical Deep Dive” series.

When people talk about NUMA, most talk about the RAM and the core count of the physical CPU. Unfortunately, the importance of cache coherency in this architecture is mostly ignored. Locating memory close to CPUs increases scalability and reduces latency if data locality occurs. However, a great deal of the efficiency of a NUMA system depends on the scalability and efficiency of the cache coherence protocol! When researching the older material on NUMA, you will find that today’s architecture is primarily labeled as ccNUMA, Cache Coherent NUMA.

The term “Cache Coherent” refers to the fact that for all CPUs any variable that is to be used must have a consistent value. Therefore, it must be assured that the caches that provide these variables are also consistent in this respect. This means that the memory system of a multi-CPU system is coherent if CPU 1 writes to a memory address (X), CPU 2 later reads X, no other writes happened to X in between, and the read operation of CPU 2 returns the value written by the write operation of CPU 1.

To ensure that the local cache is up to date, the snoopy bus protocol was invented, which allowed caches to listen in on the transport of these “variables” to any of the CPUs and update their own copies of these variables if they have them. With today’s multicore CPU architecture, cache coherency manifests itself within the CPU package as well as between CPU packages. A great deal of memory performance (bandwidth and latency) depends on the snoop protocol.

Sandy Bridge (v1) introduced a new cache architecture. The hierarchy consists of an L1, an L2 and a distributed LLC accessed via the on-die scalable ring architecture. Although they are all located on the CPU die, there are differences in latency between L1, L2 and LLC. L1 is the fastest cache: it typically takes the CPU 4 cycles to load data from the L1 cache, 12 cycles to load data from the L2 cache and between 26 and 31 cycles to load the data from the L3 cache.
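The coherence rule described above (CPU 2 must read the value that CPU 1 wrote to X) can be illustrated with a toy snooping model in which every write is broadcast on a shared bus and caches update their own copies. This is only a sketch: `Bus` and `SnoopingCache` are hypothetical names, the model writes through to memory on every store, and real protocols such as MESI track per-line states and are considerably more involved.

```python
class Bus:
    """Shared bus that carries every write to memory and to all other caches."""

    def __init__(self):
        self.memory = {}   # address -> value (main memory)
        self.caches = []   # caches listening on the bus

    def attach(self, cache):
        self.caches.append(cache)

    def broadcast_write(self, writer, addr, value):
        self.memory[addr] = value          # write through to memory
        for cache in self.caches:
            if cache is not writer:
                cache.snoop(addr, value)   # let the others listen in


class SnoopingCache:
    """Private cache of one CPU that snoops writes made by other CPUs."""

    def __init__(self, bus):
        self.lines = {}    # address -> locally cached value
        self.bus = bus
        bus.attach(self)

    def read(self, addr):
        if addr not in self.lines:         # miss: fill from memory
            self.lines[addr] = self.bus.memory.get(addr, 0)
        return self.lines[addr]

    def write(self, addr, value):
        self.lines[addr] = value
        self.bus.broadcast_write(self, addr, value)

    def snoop(self, addr, value):
        if addr in self.lines:             # update only if we hold a copy
            self.lines[addr] = value


if __name__ == "__main__":
    bus = Bus()
    cpu1, cpu2 = SnoopingCache(bus), SnoopingCache(bus)
    X = 0x40
    cpu2.read(X)            # CPU 2 caches the old value of X
    cpu1.write(X, 7)        # CPU 1 writes X; CPU 2 snoops the write
    print(cpu2.read(X))     # CPU 2 sees the value written by CPU 1
```

Without the `snoop` step, CPU 2 would keep returning its stale cached copy of X, which is exactly the situation a coherence protocol exists to prevent.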

The graph above has nothing to do with the topic. It is simply an illustration of general computer architecture.

About the author: Frank Denneman is a Chief Technologist in the Office of CTO of the Cloud Platform BU at VMware.

This is my local copy of Introduction 2016 NUMA Deep Dive Series.
